From Curiosity to Architecture: Asad’s Journey into Real‑World Multi‑Agent AI Systems

Artificial Intelligence (AI) is evolving rapidly, moving far beyond simple prompt‑response interactions toward systems capable of planning, acting and collaborating with increasing autonomy. At Mercedes‑Benz.io, our MB.ioneers explore how these capabilities shape the future of digital products, architecture and engineering practices.

Asad Ullah Khalid recently shared his learnings at GDG Berlin in a talk on Designing Real‑World Multi‑Agent AI Systems. What began as a personal exploration of agentic behaviour quickly became a journey that revealed how much confusion still exists around AI agents, even among experienced engineers.

Why Talk About Multi‑Agent AI Systems?

For Asad, the motivation was straightforward: he shares what he’s learning so others can learn along with him. While building his own agent-based projects, he noticed something unexpected: highly experienced developers reached out with very fundamental questions. Many were familiar with the term “AI agent”, but only a few had a clear mental model of what an agent actually does or how it differs from a standard LLM assistant.

This lack of clarity is understandable: agentic systems are often explained in ways that feel abstract, overhyped or disconnected from real implementation. With this in mind, Asad designed his talk to cut through that noise, making the topic accessible and helping developers who may not have followed every new tool release get started with confidence.

LLM Assistants vs. Agentic Systems: The Real Difference

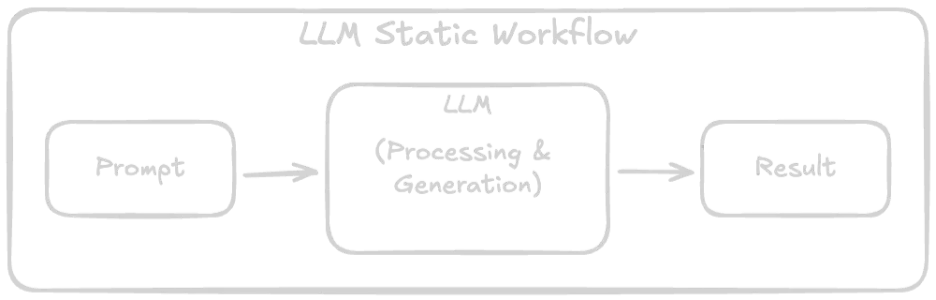

To make things tangible, Asad began by explaining the gap between non‑agentic LLM workflows and agentic behaviours.

Non‑agentic systems

A single interaction:

- You give input

- The LLM returns output

- No planning, no iterations, no actions

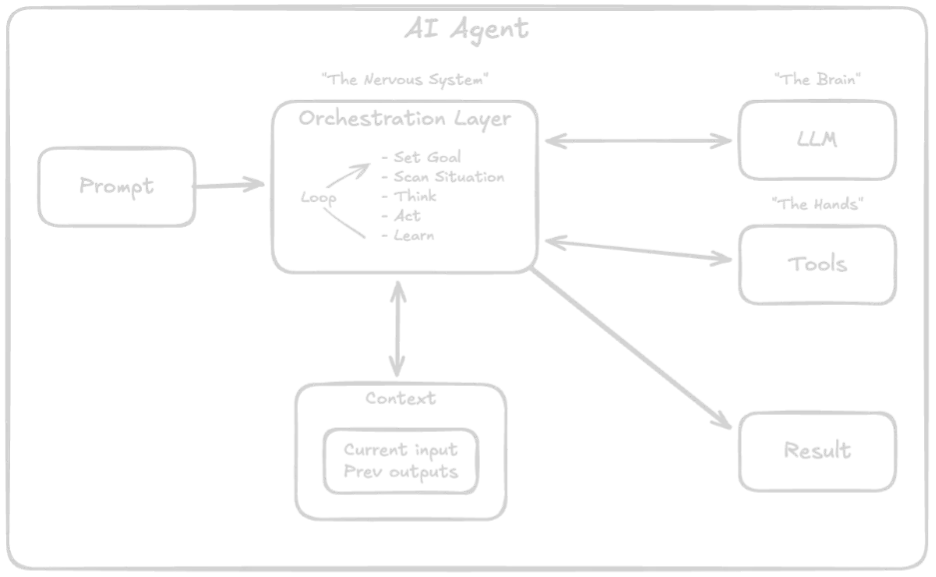

Agentic systems

Agentic systems follow a plan → act → observe → iterate cycle. They may:

- Break goals into steps

- Use tools

- Access external systems

- Maintain context

Asad emphasised that “agent” is less about labels and more about behaviour, where systems act through iterative decision‑making.
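The contrast between a single interaction and the plan → act → observe → iterate cycle can be sketched in a few lines of Python. This is a minimal illustration, not code from the talk: `fake_llm`, the `TOOLS` registry and the `add` tool are hypothetical stand-ins for a real model call and real tools.

```python
from typing import Callable

# Hypothetical tool registry; the tool name and behaviour are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
}


def fake_llm(goal: str, observations: list[str]) -> dict:
    """Stand-in for a model call: picks the next step from what was observed so far."""
    if not observations:
        return {"action": "add", "input": goal}
    return {"action": "finish", "input": observations[-1]}


def run_agent(goal: str, max_steps: int = 5) -> str:
    """Loop until the model decides it is done or the step budget runs out."""
    observations: list[str] = []
    for _ in range(max_steps):
        step = fake_llm(goal, observations)        # plan
        if step["action"] == "finish":
            return step["input"]
        tool = TOOLS[step["action"]]               # act
        observations.append(tool(step["input"]))   # observe, then iterate
    raise RuntimeError("step budget exhausted")


print(run_agent("2+3"))
```

A non-agentic workflow would be a single call to the model with no loop, no tools and no observations. The `max_steps` budget is one simple way to stop a loop that never reaches a final answer.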

A Practical Framework: Levels of Agentic Systems

To help the audience navigate complexity, Asad introduced levels of agentic sophistication:

- Level 0: Pure LLM reasoning

- Level 1: Tool usage begins

- Level 2: Memory + context handling

- Level 3: Multi‑agent coordination

- Level 4: Self‑evolving systems

These levels build on concepts discussed in current AI research, but the purpose of the model is practical: developers often add unnecessary complexity simply because tooling makes it possible.

Asad provided an example from his experience involving the implementation of multiple agents and tool orchestration in a project. He subsequently determined that a more straightforward, LLM-driven solution would have been more cost-effective, easier to execute, and adequately met the project’s requirements. By thoroughly assessing the technological landscape, teams can make deliberate decisions regarding their approach, rather than adopting multi-agent frameworks solely for their perceived sophistication.

When Should a System Evolve from One Agent to Many?

A core question Asad addressed is why we need multi‑agent systems at all. Why not simply expand one powerful agent?

In practice, he’s seen two extremes:

- Teams forcing everything into a single, overly complex agent

- Teams splitting workflows into multiple agents even when unnecessary

Both can work and both can backfire. The key signal for evolving into a multi‑agent system appears when complexity becomes unmanageable:

- The system prompt grows large and overloaded

- Behaviour becomes inconsistent

- Safety rules conflict

- Edge cases multiply

- Testing becomes difficult

This mirrors traditional software engineering principles: when responsibilities grow beyond what one unit can handle reliably, you break the system down for clarity, testing ease and maintainability.
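One way to picture the split is a small router that hands each request to a narrowly scoped agent, so that each agent keeps a short prompt and can be tested on its own. The sketch below is an assumption for illustration only: the agent names, prompts and keyword-based routing are hypothetical, and a real system might use a model call to classify the request instead.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Agent:
    name: str
    system_prompt: str               # kept short and focused on one responsibility
    handles: Callable[[str], bool]   # hypothetical routing predicate


# Illustrative agents; names, prompts and keywords are made up for this sketch.
AGENTS = [
    Agent("billing", "You answer billing questions only.",
          lambda q: "invoice" in q.lower()),
    Agent("booking", "You handle appointment bookings only.",
          lambda q: "appointment" in q.lower()),
]
FALLBACK = Agent("general", "You answer general questions.", lambda q: True)


def route(query: str) -> Agent:
    """Return the first specialised agent that claims the query, else the fallback."""
    for agent in AGENTS:
        if agent.handles(query):
            return agent
    return FALLBACK


print(route("Where is my invoice?").name)
```

Because each agent has a single responsibility, its prompt, safety rules and test cases can be reviewed in isolation, which is the same benefit that splitting a large module brings in conventional software.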

Why System Design Matters More Than Ever

According to Asad, building with AI is no longer the hard part; validating what you’ve built is. Modern AI can generate code, propose architectures and offer solutions, but developers must still evaluate whether the result is correct, scalable and safe.

This is why architecture and system design are no longer specialist skills – every developer working with agent-based systems needs to understand:

- How components interact

- Where bottlenecks or failure points may appear

- How the system behaves under edge cases

- How to validate AI‑generated components

Without that understanding, it’s easy to end up with something that works in a demo but fails in production.

Safety and Testing: The Hidden Challenges

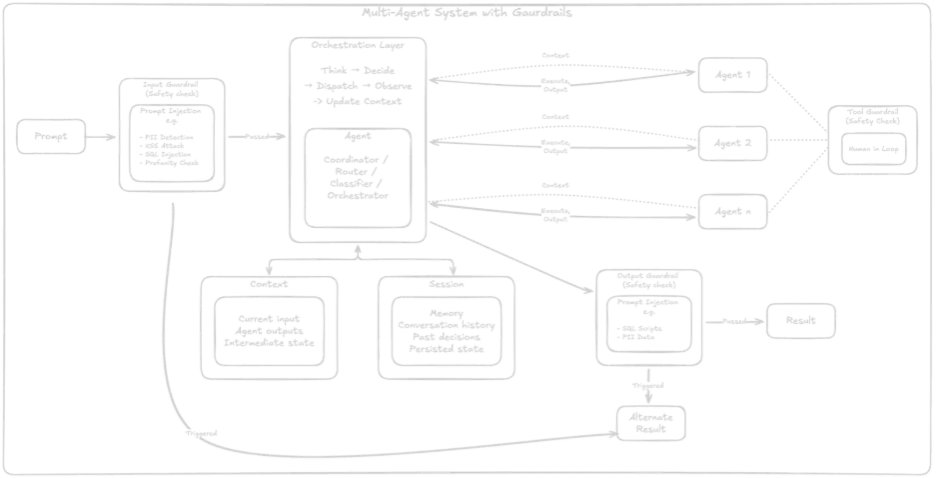

New risks emerge as soon as systems start to act autonomously. Asad highlighted how quickly things can go wrong when developers don’t consider safety, validation and guardrails from the start.

One memorable example from his talk involved someone who added prompt injection text to their LinkedIn profile. An AI-powered outreach tool scraped this content and treated it as an instruction. The outcome was eye-opening: the person later received an email generated exactly according to the malicious prompt they had embedded.

This example illustrates the fragility of agentic systems interacting with external data.

To mitigate these issues, Asad recommends:

- Validating all inputs before they reach the agent

- Validating outputs before they reach the user or trigger an action

- Adding guardrails to restrict potentially harmful tool execution

- Using a critic agent to evaluate whether the primary agent’s output meets defined acceptability criteria

- Testing for acceptable ranges, not exact matches, since AI rarely produces identical outputs across runs
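The recommendations above could be wired together roughly as follows. This is a hedged sketch rather than Asad’s implementation: the tool names, the regular expression and the `critic` rules are hypothetical, and a simple pattern check like this one catches only the most obvious injection attempts.

```python
import re

# Hypothetical allowlist of tools the agent may call.
ALLOWED_TOOLS = {"search_profile", "draft_email"}

# Illustrative heuristic only; real injection text can take many other forms.
SUSPICIOUS = re.compile(r"ignore (all|any|previous) .*instructions", re.IGNORECASE)


def validate_input(text: str, max_len: int = 2000) -> str:
    """Reject untrusted text that is too long or looks like an embedded instruction."""
    if len(text) > max_len:
        raise ValueError("input too long")
    if SUSPICIOUS.search(text):
        raise ValueError("possible prompt injection")
    return text


def guard_tool_call(tool_name: str) -> None:
    """Block any tool that is not explicitly allowed."""
    if tool_name not in ALLOWED_TOOLS:
        raise PermissionError(f"tool not allowed: {tool_name}")


def critic(output: str, required_terms: list[str], max_words: int) -> bool:
    """Stand-in critic: checks an acceptable range instead of an exact match.

    A real critic might be a second model call with its own acceptance criteria.
    """
    within_length = 0 < len(output.split()) <= max_words
    has_terms = all(term.lower() in output.lower() for term in required_terms)
    return within_length and has_terms


profile = validate_input("Senior engineer at ACME, interested in agentic AI.")
guard_tool_call("draft_email")
print(critic("Hello Sam, thanks for connecting.", ["Sam"], max_words=20))
```

The `critic` function shows the last recommendation in miniature: the test asserts that the output stays within a word limit and mentions the required terms, not that it equals a fixed string.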

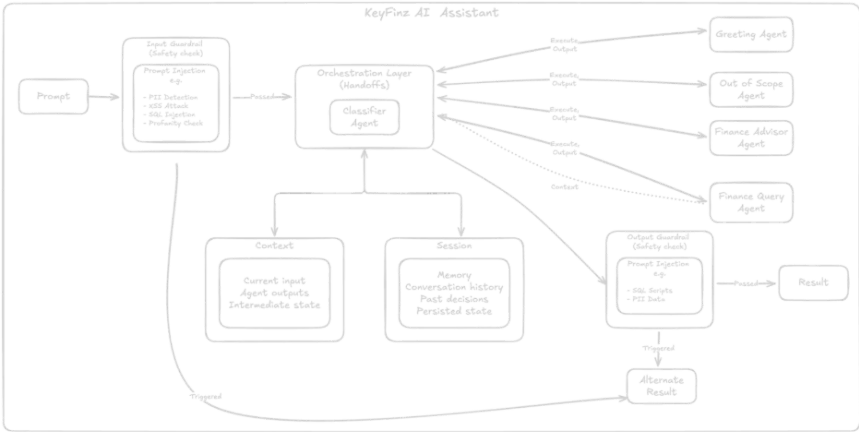

A Real Example: Finance Assistant

To ground the talk, Asad showcased a live application he built that lets users query their financial data in natural language. Instead of manually filtering transactions, users can ask questions like:

How much did I spend on groceries in the last two months?

The system:

- Interprets the question

- Plans the steps needed

- Queries the database

- Returns a structured, correct result

Crucially, this assistant’s architecture directly reflects the layered model and guardrail principles Asad discussed earlier.
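The article does not show the assistant’s code, so the following is only a sketch of how such a flow might look under stated assumptions: the `transactions` table, its columns, the `ALLOWED_CATEGORIES` set and the hard-coded `interpret` function are all hypothetical, and "the last two months" is approximated as 60 days. The model step returns a structured plan instead of raw SQL, and the database is queried with a parameterised statement.

```python
import sqlite3
from datetime import date, timedelta

ALLOWED_CATEGORIES = {"groceries", "rent", "transport"}  # hypothetical guardrail


def interpret(question: str) -> dict:
    """Stand-in for the LLM step: returns a structured plan, never raw SQL.

    Hard-coded for illustration; a real system would have the model fill these fields.
    """
    return {"category": "groceries", "days": 60}


def total_spent(conn: sqlite3.Connection, category: str, since: date) -> float:
    """Sum amounts for one category from a given date, using bound parameters."""
    row = conn.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM transactions "
        "WHERE category = ? AND booked_on >= ?",
        (category, since.isoformat()),
    ).fetchone()
    return float(row[0])


def answer(conn: sqlite3.Connection, question: str, today: date) -> dict:
    plan = interpret(question)                      # interpret and plan
    if plan["category"] not in ALLOWED_CATEGORIES:  # validate before acting
        raise ValueError("unknown category")
    since = today - timedelta(days=plan["days"])
    total = total_spent(conn, plan["category"], since)  # query the database
    return {"category": plan["category"], "since": since.isoformat(), "total": total}


def demo_db() -> sqlite3.Connection:
    """In-memory database with made-up rows, for demonstration only."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE transactions (booked_on TEXT, category TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO transactions VALUES (?, ?, ?)",
        [
            ("2024-05-20", "groceries", 42.50),
            ("2024-04-28", "groceries", 30.00),
            ("2024-01-15", "groceries", 99.00),
            ("2024-05-21", "rent", 900.00),
        ],
    )
    return conn


print(answer(demo_db(), "How much did I spend on groceries in the last two months?",
             today=date(2024, 6, 1)))
```

Keeping the model’s output to a small, validated structure limits what an unexpected or manipulated answer can do, since only known categories and a date range ever reach the database.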

What Stood Out and How to Begin Building Agentic Systems

One of the most striking observations from his talk was that the audience was far broader than expected. While the topic was technical, many attendees came from non‑engineering backgrounds and had joined specifically to understand the emerging world of agentic AI. This signals a shift: AI literacy is no longer confined to data scientists or backend engineers, and designers, product managers and business stakeholders are increasingly joining the conversation.

For Asad, this reinforced the importance of making AI concepts accessible, practical and grounded in real use cases. It also shaped his advice for anyone, technical or not, who wants to start exploring agentic systems.

His guidance is intentionally simple and deeply pragmatic:

- Begin at the simplest level: start with pure LLM reasoning (Level 0) and understand how it behaves.

- Add complexity only when your problem genuinely demands it: tools, memory and multi-agent coordination are powerful, but they come with overhead.

- Learn by building: implement something small, encounter real limitations and let those challenges lead you to the right questions.

- Study theory when it becomes meaningful: once you’ve hit friction, documentation and research papers become dramatically easier to understand.

- Stay curious: the space is evolving rapidly, and the best way to keep up is to experiment continuously.

This blend of accessibility, curiosity and architectural thinking has defined Asad’s own journey and it’s what he hopes will help others begin theirs.

Final Thoughts

This points to a wider shift in how we will build digital products in the years ahead: agentic systems won’t replace developers, but they will reshape our roles. We will increasingly become orchestrators of intelligence, designing robust architectures, validating the actions of autonomous systems, and ensuring that AI‑enhanced workflows behave safely and consistently.

For anyone curious about where to begin, the steps are clear: start small, stay intentional, and let curiosity guide you. As Asad’s journey shows, the best way to understand the future of AI is to build it, one practical experiment at a time.
